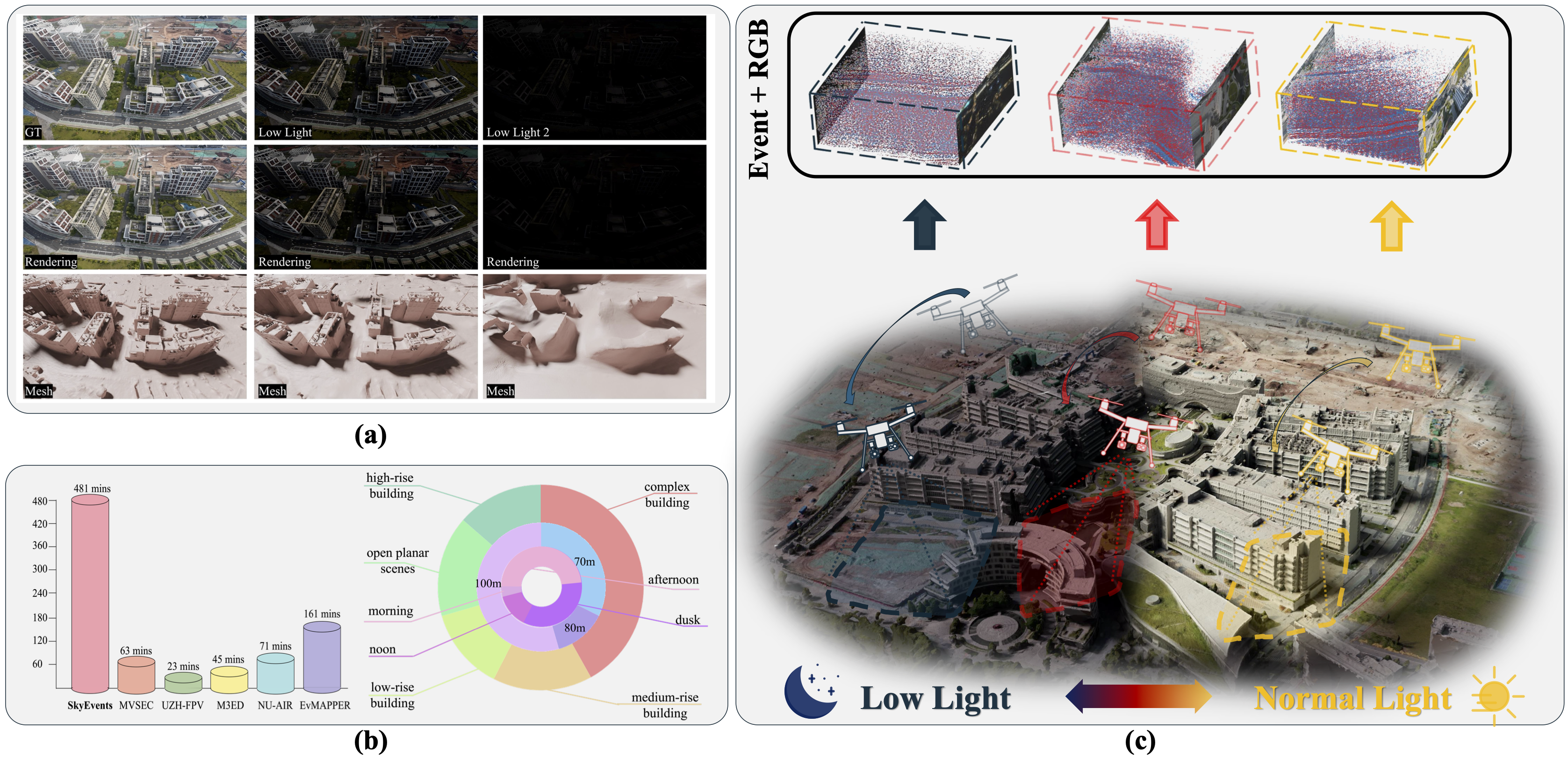

Figure 1: (a) Rendering and mesh under different light conditions. (b) Comparison and statistics of datasets. (c) Dataset collection across varied illumination conditions, scenarios, and flight altitudes.

Under Review at ICLR 2026

Recent advances in large-scale 3D scene reconstruction using unmanned aerial vehicles (UAVs) have spurred increasing interest in neural rendering techniques. However, existing approaches built on conventional cameras struggle to capture consistent multi-view images, particularly in heavily blurred and low-light environments. Two limitations are responsible: the restricted dynamic range of conventional sensors, and the motion blur that long exposures and camera motion introduce.

As a promising solution, bio-inspired event cameras remain robust in extreme scenarios, thanks to their high dynamic range and microsecond-level temporal resolution. Nevertheless, event datasets tailored to large-scale UAV 3D scene reconstruction remain limited. To bridge this gap, we introduce SkyEvents, a pioneering large-scale event-enhanced UAV dataset for 3D scene reconstruction, incorporating RGB, event, and LiDAR data.

SkyEvents encompasses 45 sequences, spanning over 8 hours of video, captured across a diverse set of illumination conditions, scenarios, and flight altitudes. To facilitate event-based 3D scene reconstruction with SkyEvents, we propose the Geometry-constrained Timestamp Alignment (GTA) module, which aligns timestamps between the event and RGB cameras. Furthermore, we introduce the Region-wise Event Rendering (RER) loss to supervise rendering optimization. With SkyEvents, we aim to motivate and equip researchers to advance large-scale 3D scene reconstruction in challenging environments.

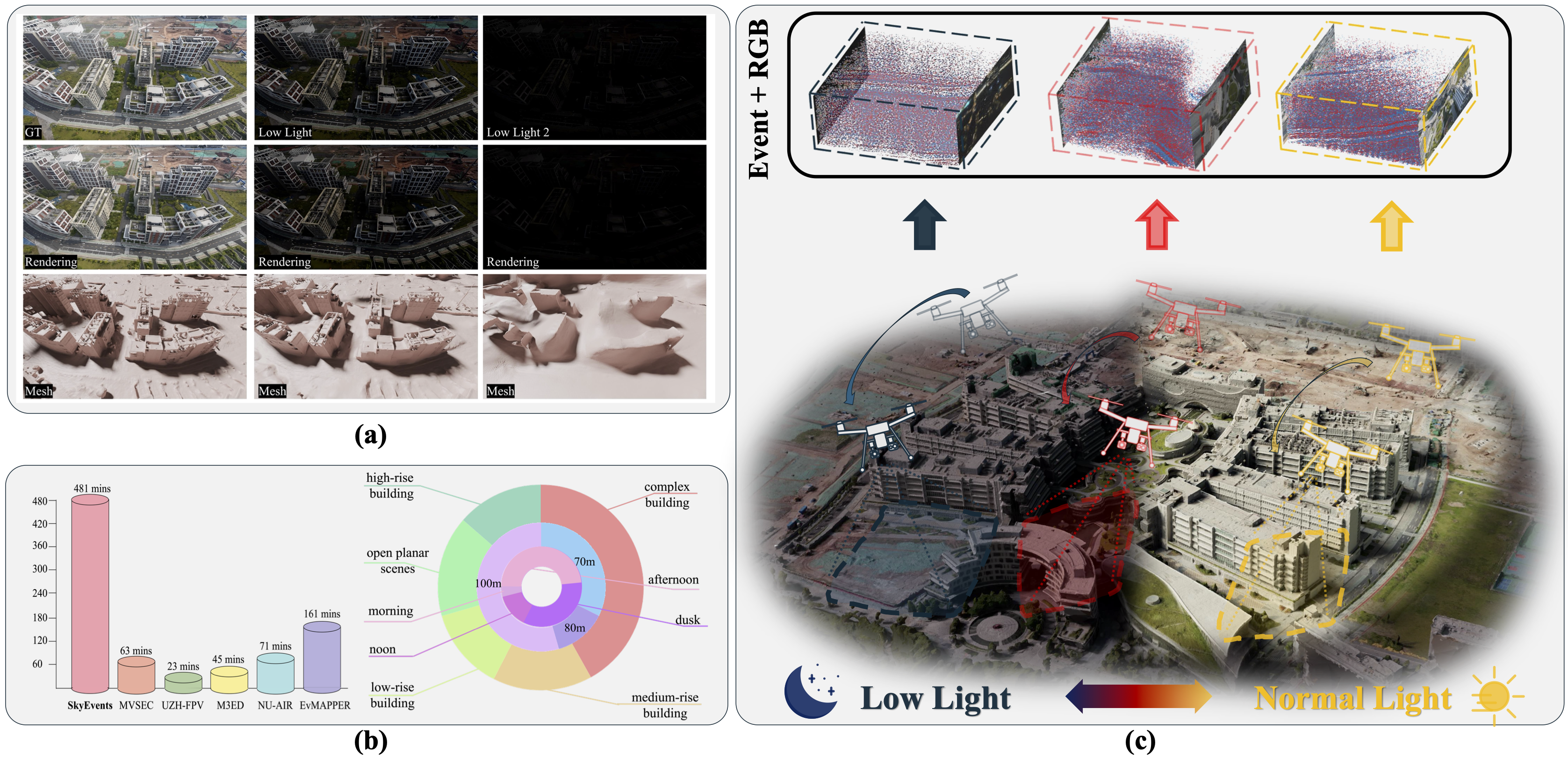

Data statistics: flight path, scenario types, illumination, and flight height distribution.

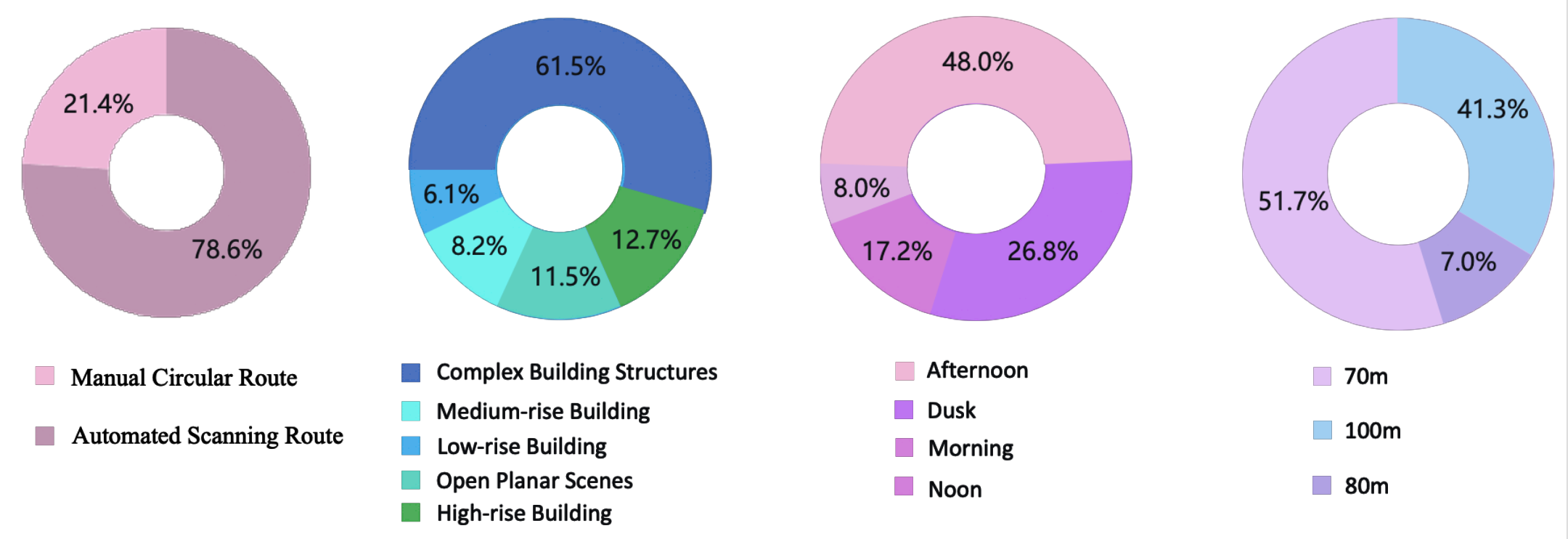

Figure 2: Data collection and rendering pipelines. The platform consists of a UAV payload, Prophesee EVK4 event camera, and DJI RGB camera.

Geometry-Constrained Timestamp Alignment: To address the ~5 ms delay between the event and RGB streams, we propose GTA. It uses a geometry consistency score based on reprojection error to align timestamps with microsecond precision, enabling frame-accurate synchronization.
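As a rough illustration of the idea, the sketch below searches a grid of candidate time offsets and keeps the one with the lowest mean reprojection error. It is not the GTA module itself: the toy camera model (a pinhole camera translating along the x axis), the helper names `project`, `reprojection_error`, and `estimate_offset`, the grid step, and the sign convention of the offset are all assumptions made for this example.

```python
import numpy as np

def project(points_w, cam_x, focal=500.0):
    """Pinhole projection for a camera translated by cam_x along the x axis (toy model)."""
    x = points_w[:, 0] - cam_x
    return focal * np.stack([x, points_w[:, 1]], axis=1) / points_w[:, 2:3]

def reprojection_error(offset_s, t_obs, obs_uv, points_w, cam_x_at):
    """Mean pixel error when the observation timestamps are shifted by offset_s seconds."""
    errs = []
    for t, uv in zip(t_obs, obs_uv):
        pred = project(points_w, cam_x_at(t + offset_s))
        errs.append(np.linalg.norm(pred - uv, axis=1).mean())
    return float(np.mean(errs))

def estimate_offset(t_obs, obs_uv, points_w, cam_x_at, search_s=0.02, step_s=1e-4):
    """Grid search over candidate offsets; returns (best offset, its error)."""
    candidates = np.arange(-search_s, search_s + step_s / 2, step_s)
    errors = [reprojection_error(c, t_obs, obs_uv, points_w, cam_x_at)
              for c in candidates]
    best = int(np.argmin(errors))
    return float(candidates[best]), float(errors[best])

# Synthetic check: simulate a 5 ms shift (hypothetical values throughout).
rng = np.random.default_rng(0)
points_w = np.column_stack([
    rng.uniform(-10, 10, 50),   # x in metres
    rng.uniform(-10, 10, 50),   # y in metres
    rng.uniform(20, 60, 50),    # depth in metres
])
cam_x_at = lambda t: 5.0 * t    # assumed constant 5 m/s flight along x
true_offset = 0.005
t_obs = np.linspace(0.0, 1.0, 20)
obs_uv = [project(points_w, cam_x_at(t + true_offset)) for t in t_obs]

offset, err = estimate_offset(t_obs, obs_uv, points_w, cam_x_at)
print(f"estimated offset: {offset * 1e3:.2f} ms, mean error: {err:.2e} px")
```

On this toy data the search recovers the simulated shift to within the grid resolution. A real pipeline would need interpolated 6-DoF poses and a finer or refined search to approach microsecond precision.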

Region-Wise Event Rendering: We introduce an event-based brightness-change consistency loss. By estimating the warp between sensors and defining a region-aligned supervision, we constrain brightness changes only within overlapping regions, significantly improving rendering in low-light and blurred areas.
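One way to picture a region-restricted brightness-change loss is sketched below. It relies on the standard event-generation model, in which the log-intensity change at a pixel is approximately the contrast threshold times the sum of event polarities. Whether RER uses exactly this form is an assumption; the function names, the `contrast` value, and the precomputed `overlap_mask` (standing in for the estimated warp between sensors) are hypothetical.

```python
import numpy as np

def accumulate_events(xs, ys, polarities, shape):
    """Sum signed event polarities per pixel into an event map."""
    acc = np.zeros(shape, dtype=np.float64)
    np.add.at(acc, (ys, xs), polarities)  # handles repeated pixel indices
    return acc

def region_event_loss(render_t0, render_t1, event_map, overlap_mask,
                      contrast=0.2, eps=1e-6):
    """Mean squared gap between rendered and event-implied log-brightness
    change, evaluated only where overlap_mask is True."""
    pred_change = np.log(render_t1 + eps) - np.log(render_t0 + eps)
    target_change = contrast * event_map
    sq = (pred_change - target_change) ** 2
    n = overlap_mask.sum()
    return float((sq * overlap_mask).sum() / max(n, 1))

# Toy example: a 4x6 render in which one pixel brightens by two events' worth.
shape = (4, 6)
render_t0 = np.full(shape, 0.5)
render_t1 = render_t0.copy()
render_t1[1, 2] *= np.exp(2 * 0.2)   # consistent with two positive events
render_t1[0, 5] = 1.0                # change with no events, outside the overlap

event_map = accumulate_events(np.array([2, 2]), np.array([1, 1]),
                              np.array([1.0, 1.0]), shape)

overlap_mask = np.zeros(shape, dtype=bool)
overlap_mask[:, :4] = True           # assume only the left columns overlap

loss_masked = region_event_loss(render_t0, render_t1, event_map, overlap_mask)
loss_full = region_event_loss(render_t0, render_t1, event_map,
                              np.ones(shape, dtype=bool))
print(loss_masked, loss_full)
```

Restricting the loss to the overlap keeps pixels that the event camera never observed from penalizing the render, which is why the masked loss here is near zero while the unmasked one is not.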

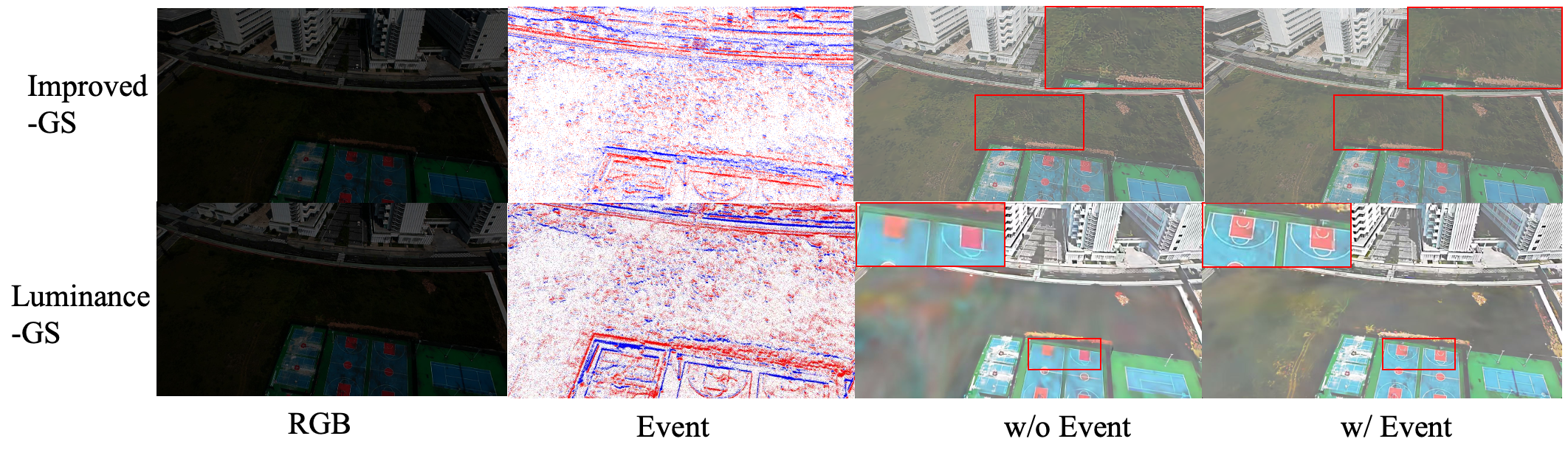

We evaluated our method with state-of-the-art 3DGS pipelines (Luminance-GS and Improved-GS). Integrating the event modality markedly enhances rendering quality, particularly for deblurring and low-light recovery.

Comparison of 3D scene reconstruction with and without event enhancement. Note the reduction in artifacts and sharper details in the event-enhanced results.

@inproceedings{skyevents2026,
  title={SkyEvents: A Large-Scale Event-Enhanced UAV Dataset for Robust 3D Scene Reconstruction},
  author={Anonymous Authors},
  booktitle={Under Review at ICLR},
  year={2026}
}